When I was talking to another writer on Discord, I realized that I tend to be somewhat vague and offhand when I talk about my writing process, and assume people already know what I’m talking about, so I’m going to walk through the whole process here for transparency’s sake. This process includes the use of AI software for transcription and cleanup of dictated content, but it doesn’t start or end there, so if you are interested in that part, please bear with me until I get there.

-Initial brainstorming: this usually happens in the notebook of the moment, whether Moleskines with fancy covers, or Chilterns with even fancier covers but less nice paper, or rickety homemade notebooks that split the difference (i.e., moderately fancy covers, colored printer paper for pages, iffy craftsmanship). I will sometimes go and debate plot points, real-world details or genre tropes with AI, but that tends to feel kind of sterile, and usually I only go there once I feel like I need to impose some order on the chaos of my notes. It can take a while for my ideas to get “off of paper.” Both the Star Master duology and the space regency WIP took years to get to the point where I was comfortable actually writing on them.

-Scrivener file: Starting a Scrivener file for a project is no guarantee of completion, but it means I’m somewhat serious about that project. I use a modified version of one of Jamie Gold’s Scrivener templates. Generally I put a brief mission statement above the Manuscript area, and what I already know about the characters and setting under Research, plus whatever images or other materials seem relevant.

-Actual writing: this can be fingers on keyboard, or longhand writing in a notebook which then gets read out loud and turned into dictation, or it can start as dictation. I feel like typing is better for discovery writing, especially in the right mood, while dictation is better for situations where I have some idea of where I’m going but not enough motivation to sit and type. Let’s focus on the dictation process.

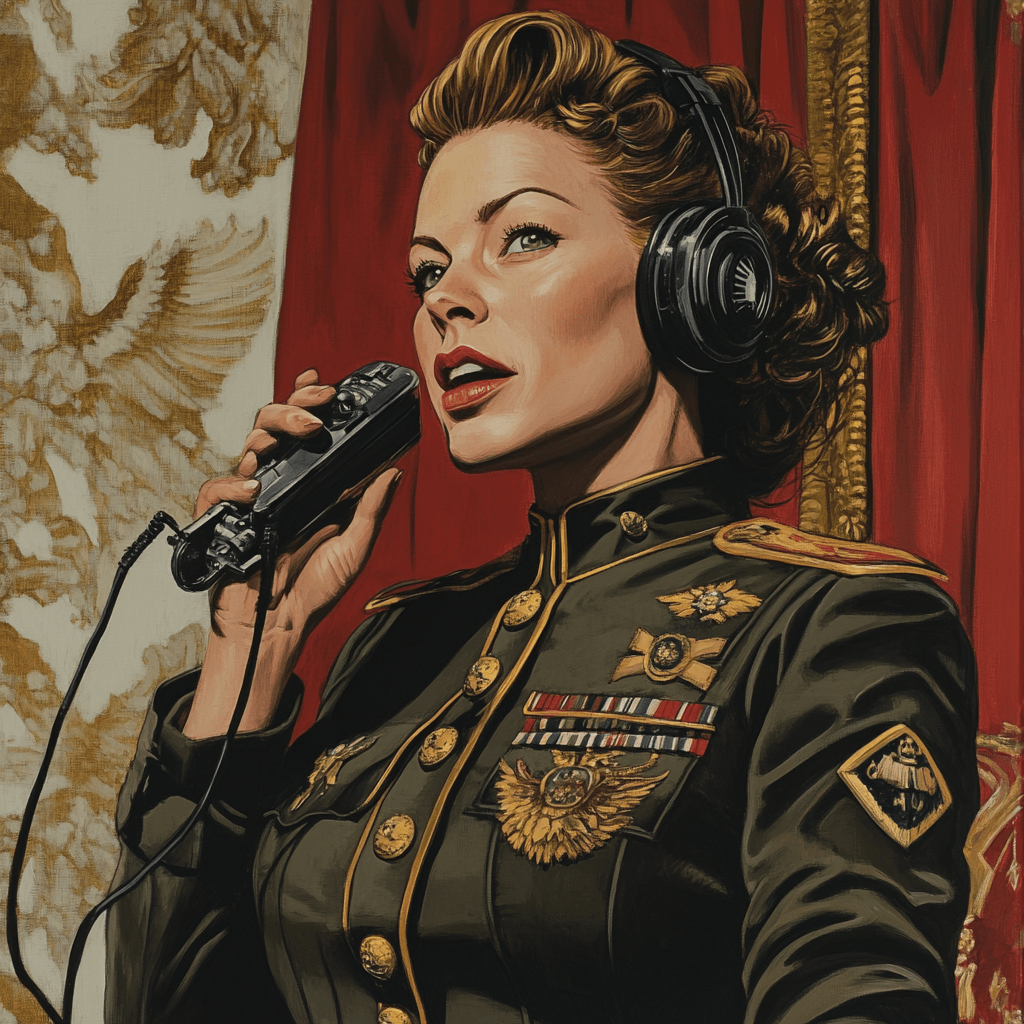

–I use this phone app for the initial recording. It’s free, people who care more about audio quality than I do seem to think well of it, and it has a cute interface themed to an old-school tape recorder, which you can customize in exchange for watching ads. But you do you. All this process I’m describing really requires is some method of recording mp3/m4a/etc files on the move and some way of getting those files to your primary computer. Whatever phone app you like will work. Dictation is normally something that I do while moving. Maybe once a week or so, things line up in such a way that I can dictate on the commute home.

–More recently I’ve tried doing it on the magnetic rowing machine, with fairly decent results, because the magnetic rowers are quiet. Sometimes I walk around and talk into the phone app, although that’s unusual for me. Sometimes, if I’m working from home and it’s a slow day, I’ll dictate a bit at my WFH office. Don’t worry about punctuation. Worry as much or as little as you feel like about names. The workflow I have going on is for two projects (Hunter Healer King 3, and the space regency) where most things have fairly standard European names with just a few outliers. When I was working on the Star Master books and using a more primitive form of dictation, the characters all had in-universe names plus dictation code names for finding and replacing. (For instance, Jetay was Adam, Lanati was Helen, and Shenti was Imogene). If I tried my hand at, say, epic fantasy, I might have to go back to the dictation code name approach.
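For anyone who wants to automate the code name approach instead of running find/replace by hand, here is a minimal sketch. It assumes the transcript is already plain text; the `CODE_NAMES` mapping and the `restore_names` function are hypothetical names, filled in with the three examples above.

```python
import re

# Dictation code name -> in-universe name (examples taken from the post).
CODE_NAMES = {
    "Adam": "Jetay",
    "Helen": "Lanati",
    "Imogene": "Shenti",
}

def restore_names(text: str, mapping: dict[str, str] = CODE_NAMES) -> str:
    """Replace each whole-word code name with its in-universe name."""
    if not mapping:
        return text
    # \b keeps "Adam" from matching inside longer words such as "Adamant".
    pattern = re.compile(r"\b(" + "|".join(map(re.escape, mapping)) + r")\b")
    return pattern.sub(lambda m: mapping[m.group(1)], text)

print(restore_names("Adam looked at Helen."))
```

Matching on whole words is the main design choice here: a plain find/replace would also rewrite any longer word that happens to contain a code name.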

-Transcription: Whisper AI is part of the OpenAI stable, but I first fell in love with it through a now-defunct website intended to help people fansub movies and anime series. Eventually, I learned just barely enough Python to set up a local instance of Whisper, and put together a short script to get it to transcribe to text files, using three different web articles and a consultation with Claude AI. The hardest part of this process was installing something called “ffmpeg” (required to run Whisper in Python), which refused to install manually, so I ended up having to use an installation helper called Chocolatey. Another dependency was PyTorch, which I found less of a hassle to set up. This article has a pretty good overview of the installation process, much better than my description here. Transcription itself is fairly quick. I open up the text file where I saved the script, update it to reflect the name of the audio file, and copy/paste it into the Python command line. Even on my ancient home computer, it takes less time for Whisper to go through the audio file and output a txt file with the transcript than it would for me to listen to it.
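A rough sketch of what that kind of script can look like, assuming the `openai-whisper` package, PyTorch, and ffmpeg are all installed. This is not the exact code described above; the function names, the `base` model size, and the audio file name in the usage note are placeholders.

```python
from pathlib import Path

def transcript_path(audio_path: str) -> Path:
    """Return the .txt path that sits next to the audio file."""
    return Path(audio_path).with_suffix(".txt")

def transcribe_to_txt(audio_path: str, model_name: str = "base") -> Path:
    """Transcribe one audio file with Whisper and save the text beside it."""
    # Imported here so the path helper above still works without Whisper installed.
    import whisper

    model = whisper.load_model(model_name)   # downloads the model on first use
    result = model.transcribe(audio_path)    # needs ffmpeg available on the PATH
    out_path = transcript_path(audio_path)
    out_path.write_text(result["text"].strip() + "\n", encoding="utf-8")
    return out_path
```

Calling `transcribe_to_txt("commute_dictation.m4a")` (a made-up file name) would write `commute_dictation.txt` in the same folder. Larger model sizes tend to be more accurate but slower, which matters on an older computer.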

What I like about Whisper is that it will at least take a stab at guessing punctuation based on your pauses and the words you are saying, and it (IMO) has a decent sense of phonetics. What I don’t like is how it handles those moments when I fall silent thinking of what to say next, and there’s only background noise. It tends to treat those as repetitions (sometimes dozens of repetitions) of whatever I last said. So, you get something that has more ellipses than you’d like, and probably some misspelled names, and maybe the repeated text problem I mentioned, but the end result should be, for a person with a neutral American accent, messy in the same ways that the source speech/audio file was messy. Don’t worry too much about the messiness. We’re going to have that fixed.
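The repeated text problem can also be trimmed before the transcript goes anywhere else. The sketch below is one possible approach, not part of the workflow described here: it assumes the repeats show up as identical consecutive sentences, and `collapse_repeats` is a hypothetical helper name.

```python
import re

def collapse_repeats(text: str, max_repeats: int = 1) -> str:
    """Keep at most max_repeats consecutive copies of the same sentence."""
    # Split after ., !, ? or an ellipsis character when whitespace follows.
    sentences = re.split(r"(?<=[.!?…])\s+", text.strip())
    kept = []
    previous = None
    run = 0
    for sentence in sentences:
        key = sentence.casefold()
        run = run + 1 if key == previous else 1
        previous = key
        if run <= max_repeats:
            kept.append(sentence)
    # Line breaks between sentences become single spaces.
    return " ".join(kept)

print(collapse_repeats("He left. Thank you. Thank you. Thank you. She stayed."))
```

Deliberate repetition in dialogue would be collapsed too, so raising `max_repeats` to 2 or 3 is a safer setting for fiction.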

-A while back, I found a great set of commands for using the Claude AI chatbot to clean up transcriptions, and copied the commands over to my blog. They have served me well, and they look like they should be fairly AI-agnostic, but if you’re using Grok or ChatGPT or Venice or Gemini and you *know* it won’t cooperate with some particular turn of phrase in those commands, go ahead and change it. If you somehow managed to dictate for like 45 minutes or an hour, you may want to split the transcription into two shorter files, to reduce the risk of the AI either trying to summarize unnecessarily or getting confused by what’s going on. If you have some kind of glossary of character names and setting concepts, you can try including it as an additional attachment (besides the transcription file) and referencing the glossary in your commands to the AI. I generally don’t bother, preferring just to correct name misspellings after the fact. This is partly because Claude is designed to *not* remember what you tell it from one chat to the next. I don’t know whether Anthropic’s rationale was privacy concerns or memory/coherence concerns, or concerns about the type of emotional dependency that users sometimes develop towards ChatGPT, but this kind of strategic forgetting is acceptable for my purposes.

Once the AI gives me a cleaner transcription in the chatbot, I copy and paste that into a sort of “scratch pad” Word document where I mess around with my latest writings. If you’d rather just dump it into your main manuscript, go ahead. I do a mass find/replace to change the straight apostrophes and quotation marks to curly ones. (In modern versions of Word, you do this by putting the same symbol under “Find” and “Replace.”) Then I fix any names that got messed up. Apparently, I have a bad tendency to pronounce “Haupstadt” as “Holpstad.”
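If splitting a long transcription by hand gets tedious, it can be scripted. This is a minimal sketch under a couple of assumptions: the 2500-word limit is an arbitrary placeholder rather than a tested threshold, and `split_transcript` is a hypothetical helper that only breaks between sentences.

```python
import re

def split_transcript(text: str, max_words: int = 2500) -> list[str]:
    """Split text into chunks of roughly max_words, breaking between sentences."""
    if not text.strip():
        return []
    sentences = re.split(r"(?<=[.!?…])\s+", text.strip())
    chunks, current, count = [], [], 0
    for sentence in sentences:
        words = len(sentence.split())
        # Start a new chunk when adding this sentence would pass the limit.
        if current and count + words > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += words
    if current:
        chunks.append(" ".join(current))
    return chunks

print(split_transcript("One two three. Four five six. Seven eight.", max_words=4))
```

Each chunk can then be saved as its own file and attached to a separate chat, so the cleanup commands only ever see one manageable piece at a time.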

Then I take a closer look at the text. Does it reflect my actual intentions at the time of dictation? Have I had a better idea since then? Did Whisper or Claude mash two sides of a conversation into a single long speech? What’s going on inside the curly brackets, which Claude was commanded to use for stuff it was unsure about? As long as I do this the same day I did the dictation, or at worst a day or two later, I can usually get through this part pretty quickly. What I used to hate about dictation cleanup was the part where I had to fix every comma and install every quotation mark by hand, and now that is done for me.
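For a longer session, it can help to pull out everything inside curly brackets as a checklist before reading the full text. A small sketch, assuming the uncertain passages are wrapped in plain `{` and `}` with no nesting; `flagged_passages` is a hypothetical helper name.

```python
import re

def flagged_passages(text: str, context: int = 30) -> list[tuple[str, str]]:
    """Return (flagged text, surrounding snippet) for each {...} span."""
    results = []
    for match in re.finditer(r"\{([^{}]*)\}", text):
        # Grab a little text on either side so the flag can be found again.
        start = max(match.start() - context, 0)
        end = min(match.end() + context, len(text))
        results.append((match.group(1).strip(), text[start:end]))
    return results

for flagged, snippet in flagged_passages("She rode to {Holpstad} at dawn."):
    print(flagged, "|", snippet)
```

The snippet is only there for orientation; the actual fix still happens in the manuscript.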

-Oftentimes, I start a scene with dictation and then expand or extend it with typing, which is how something that was maybe 300-400 words when I finished going over Claude’s version turns into 800-1000. Description, for instance, is not something I’m good at doing during the dictation phase, so that ends up being put down in the typing phase. I may ask Claude to continue a scene it knows from the transcription process, but this usually doesn’t result in anything more useful than giving me an idea of reader expectations, and as I’ve said in the fanficcing essay, I’ve never seen an AI continuation of my original works that I wanted to use.

So, that’s the current drafting process chez Jaglion Press. If you have questions, please let me know!
